When I tried using iOS’s AVSampleBufferDisplayLayer in OGVKit last year, I had a few problems, most notably a rendering bug on one device and an inability to display frames with 4:4:4 subsampling (higher chroma quality).

Since the rendering path I used instead is OpenGL-based, and OpenGL is being deprecated in iOS 12, I figured it might be worth another look rather than converting the shader and rendering code to Metal.

The rendering bug that was striking at 360p on some devices was fixed by Apple in iOS 11 (thanks all!), so that’s a nice improvement!

Fiddling around with the available pixel formats, I found that while 4:4:4 YCbCr still doesn’t work, 4:4:4:4 AYCbCr (YCbCr plus an alpha channel) does work in iOS 11 and the iOS 12 beta!
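Moving to the AYCbCr format means the three separate Y, Cb and Cr planes coming out of the decoder have to be interleaved into one packed buffer, with an alpha byte added to every pixel. Below is a minimal scalar sketch of that repacking. It assumes the byte order A, Y, Cb, Cr (as described for Core Video's `kCVPixelFormatType_4444AYpCbCr8`) and a constant alpha of 255 for fully opaque pixels. The function name `pack_aycbcr_444` is hypothetical, and OGVKit's real conversion is SIMD-accelerated; this plain loop only illustrates the layout.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: interleave three full-resolution (4:4:4) 8-bit
 * planes plus a constant alpha into packed 4-byte pixels.
 * Assumed byte order per pixel: A, Y', Cb, Cr. */
void pack_aycbcr_444(const uint8_t *y, size_t y_stride,
                     const uint8_t *cb, size_t cb_stride,
                     const uint8_t *cr, size_t cr_stride,
                     uint8_t alpha,
                     uint8_t *dst, size_t dst_stride,
                     size_t width, size_t height)
{
    assert(y && cb && cr && dst);
    for (size_t row = 0; row < height; row++) {
        /* Strides are in bytes, so rows may carry padding. */
        const uint8_t *y_row = y + row * y_stride;
        const uint8_t *cb_row = cb + row * cb_stride;
        const uint8_t *cr_row = cr + row * cr_stride;
        uint8_t *out = dst + row * dst_stride;
        for (size_t x = 0; x < width; x++) {
            out[4 * x + 0] = alpha;     /* fixed alpha value */
            out[4 * x + 1] = y_row[x];  /* luma */
            out[4 * x + 2] = cb_row[x]; /* blue-difference chroma */
            out[4 * x + 3] = cr_row[x]; /* red-difference chroma */
        }
    }
}
```

For a 2×2 frame, the first output pixel is `{255, y[0], cb[0], cr[0]}` and the second row starts at `dst + dst_stride`.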

First I swapped out the pixel format without adjusting my data format conversions, which produced a naturally corrupt image:

Then I switched up my SIMD-accelerated sample format conversion to combine the three planes of data and a fourth fixed alpha value, but messed it up and got this:

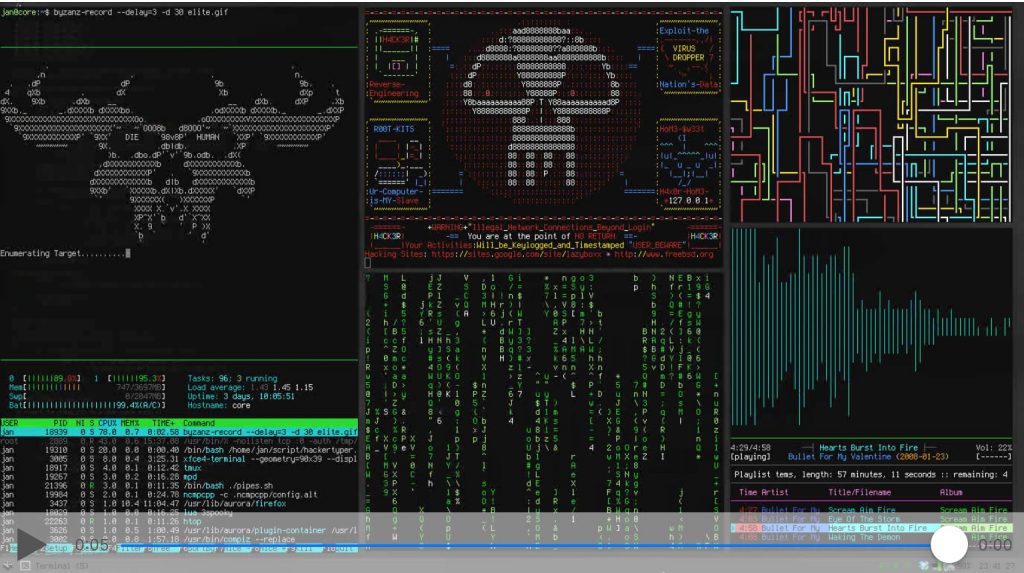

It turned out I’d only filled in 4 items of a 16×8 (sixteen 8-bit lanes) vector literal. Durrrr. :) Fixed that and it’s now preeeeetty:

(The sample video is a conversion of a silly GIF, with lots of colored text and sharp edges that degrade visibly at 4:2:0 and 4:2:2 subsampling. I believe the name of the original file was “yo-im-hacking-furiously-dude.gif”.)

A remaining downside is that on my old 32-bit devices stuck on iOS 9 and iOS 10, the 4:2:2 and 4:4:4 samples don’t play. So I either need to keep the OpenGL code path for them, just document that it won’t work, or maybe do a runtime check and downsample to 4:2:0.
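For the runtime-downsampling option, each full-resolution chroma plane (Cb and Cr) would have to be halved both horizontally and vertically. Below is a minimal sketch that uses a plain 2×2 box average with rounding. This is an assumed filter choice that ignores chroma siting, and a 4:2:2 source would need only vertical halving, which this sketch doesn't handle separately. The helper name `chroma_444_to_420` is hypothetical, and the iOS version check itself is not shown.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: downsample one 4:4:4 chroma plane to 4:2:0 by
 * averaging each 2x2 block (rounded). Odd widths/heights reuse the last
 * column/row. dst must hold ((width+1)/2) x ((height+1)/2) samples. */
void chroma_444_to_420(const uint8_t *src, size_t src_stride,
                       size_t width, size_t height,
                       uint8_t *dst, size_t dst_stride)
{
    assert(src && dst && width > 0 && height > 0);
    size_t out_w = (width + 1) / 2;
    size_t out_h = (height + 1) / 2;
    for (size_t oy = 0; oy < out_h; oy++) {
        size_t y0 = 2 * oy;
        size_t y1 = (y0 + 1 < height) ? y0 + 1 : y0; /* clamp bottom edge */
        const uint8_t *r0 = src + y0 * src_stride;
        const uint8_t *r1 = src + y1 * src_stride;
        for (size_t ox = 0; ox < out_w; ox++) {
            size_t x0 = 2 * ox;
            size_t x1 = (x0 + 1 < width) ? x0 + 1 : x0; /* clamp right edge */
            unsigned sum = r0[x0] + r0[x1] + r1[x0] + r1[x1];
            dst[oy * dst_stride + ox] = (uint8_t)((sum + 2) / 4);
        }
    }
}
```

Running it once per chroma plane, while passing luma through unchanged, would yield a 4:2:0 frame that the older devices can display. The sharp colored text in the sample video would soften in the same way it does in a native 4:2:0 encode.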