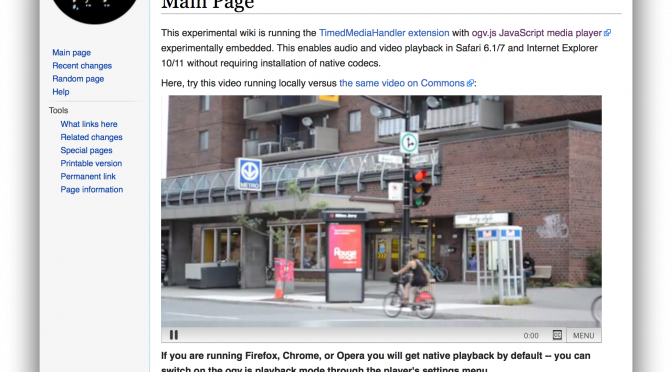

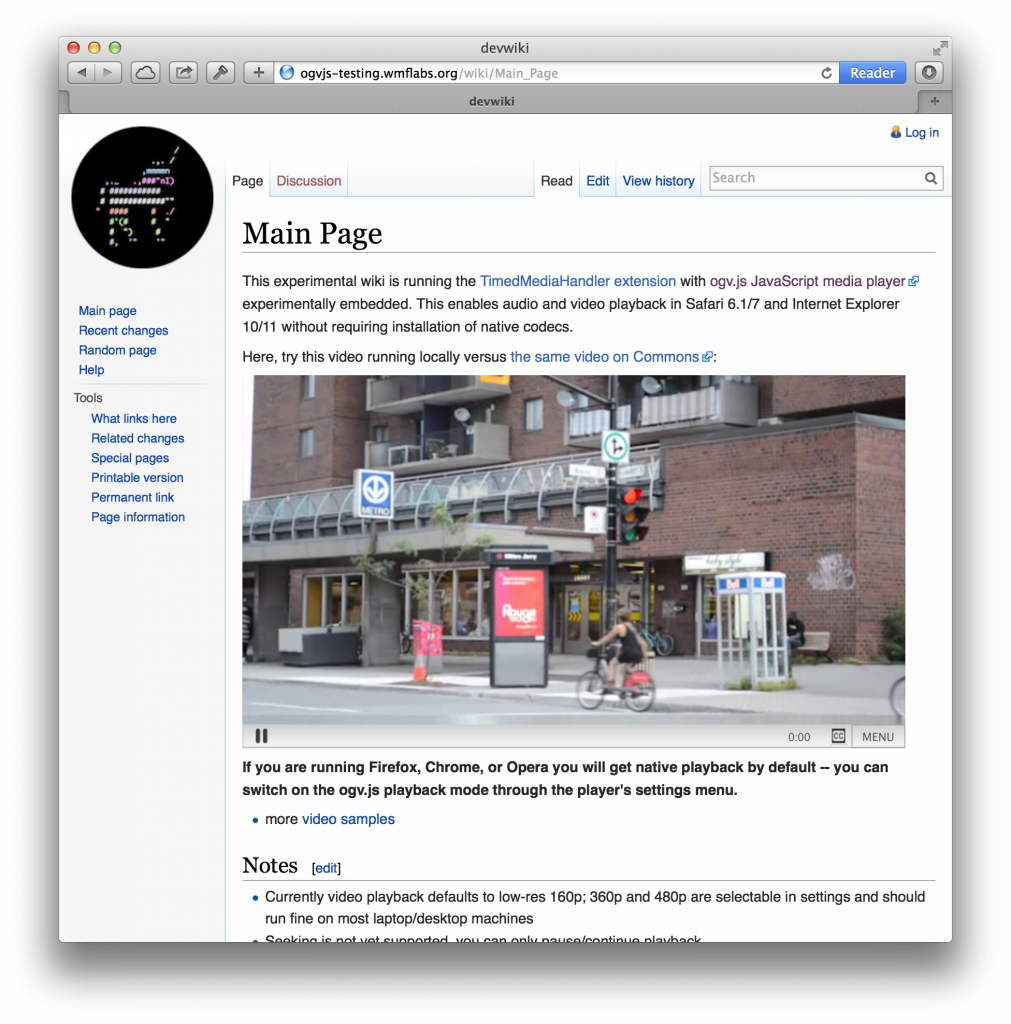

We’ve started deploying my ogv.js JavaScript video/audio playback engine to Wikipedia and Wikimedia Commons for better media compatibility in Safari, Internet Explorer and the new Microsoft Edge browser.

“It’s an older codec, but it checks out. I was about to let them through.”

This first generation uses the Ogg Theora video codec, which we started using on Wikipedia “back in the day” before WebM and MP4/H.264 started fighting it out for dominance of HTML5 video. In fact, Ogg Theora/Vorbis were originally proposed as the baseline standard codecs for HTML5 video and audio elements, but Apple and Microsoft refused to implement them and the standard ended up dropping a baseline requirement altogether.

Ah, standards. There’s so many to choose from!

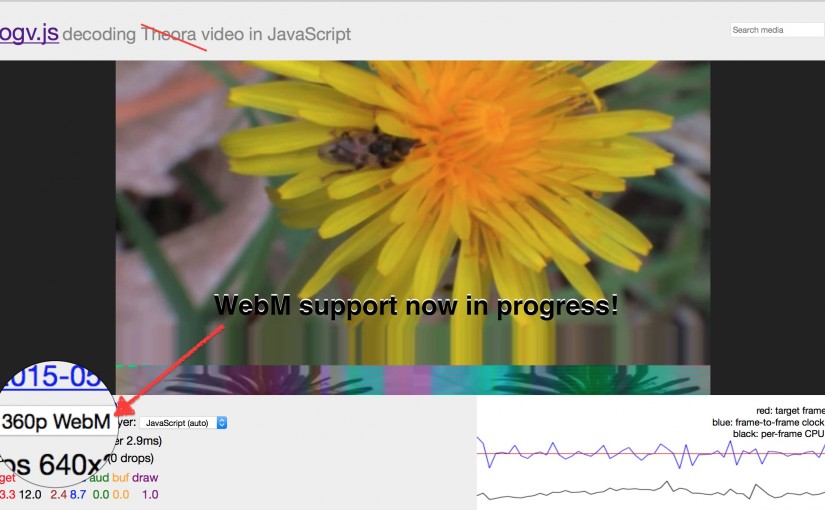

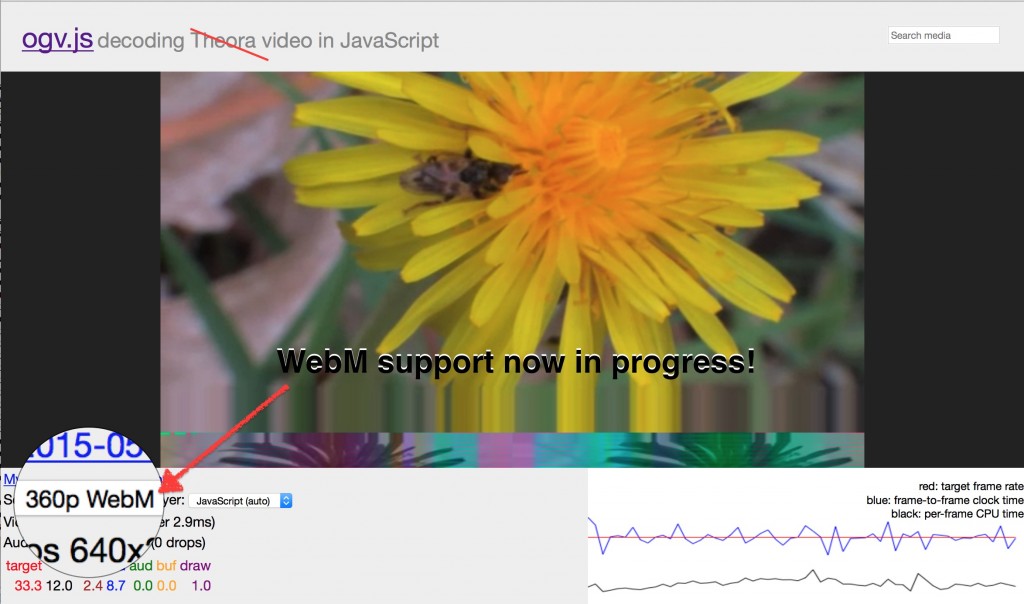

I’ve got preliminary support for WebM in ogv.js; it needs more work but the real blocker is performance. WebM’s VP8 and newer VP9 video codecs provide much better quality/compression ratios, but require more CPU horsepower to decode than Theora… On a fast MacBook Pro, Safari can play back ‘Llama Drama’ in 1080p Theora but only hits 480p in VP8.

That’s about a 5x performance gap in terms of how many pixels we can push… For now, the performance boost from using an older codec is worth it, as it gets older computers and 64-bit mobile devices into the game.

But it also means that to match quality, we have to double the bitrate (and thus bandwidth) of Theora output versus VP8 at the same resolution. So in the longer term, it’d be nice to get VP8, or the newer VP9 which halves bitrate again, working well enough on ogv.js.
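To put rough numbers on the trade-off above, here’s a back-of-the-envelope sketch. The frame sizes are an assumption (16:9 video, so 1920×1080 for 1080p and 854×480 for 480p), and the bitrate ratios are just the “double” and “half” rules of thumb from the text, not measurements.

```javascript
// Assumption: 16:9 frames, i.e. 1920x1080 for 1080p and 854x480 for 480p.
const pixels1080p = 1920 * 1080; // 2,073,600 pixels per frame
const pixels480p = 854 * 480;    // 409,920 pixels per frame
const pixelRatio = pixels1080p / pixels480p; // a bit over 5

// Relative bitrate for similar quality, with VP8 as the baseline.
// These are the rough rule-of-thumb ratios from the text, not measurements.
const relativeBitrate = { theora: 2, vp8: 1, vp9: 0.5 };
const theoraVsVp9 = relativeBitrate.theora / relativeBitrate.vp9;

console.log(pixelRatio.toFixed(2)); // "5.06"
console.log(theoraVsVp9);           // 4
```

So under those assumptions, Theora pushes about five times the pixels per second on the same machine, but would need something like four times the bandwidth of VP9 to look as good.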

emscripten: making ur C into JS

ogv.js’s player logic is handwritten JavaScript, but the guts of the demuxer and decoders are cross-compiled from well-supported, battle-tested C libraries.

Emscripten is a magical tool developed at Mozilla to help port large C/C++ codebases like games to the web platform. In short, it runs your C/C++ code through the well-known clang compiler, but instead of producing native code it uses a custom LLVM backend that produces JavaScript code that can run in any modern browser or node.js.

Awesome town. But what are the limitations and pain points?

Integer math

Readers with suitably arcane knowledge may be aware that JavaScript has only one numeric type: 64-bit double-precision floating-point.

This is “convenient” for classic scripting in that you don’t have to worry about picking the right numeric type, but it has several horrible, horrible consequences:

- When you really wanted 32-bit integers, floating-point math is going to be much slower

- When you really wanted 64-bit integers, floating-point math is going to lose precision if your numbers are too big… so you have to emulate with 32-bit integers

- If you relied on the specific behavior of 32-bit integer multiplication, you may have to use a slow polyfill of Math.imul
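The last two points are easy to see in a console. A small sketch: `imulPolyfill` is a hypothetical name, written in the style of the commonly circulated fallback for engines that lack a native Math.imul.

```javascript
// 64-bit integers: doubles are only exact up to 2^53.
const big = Math.pow(2, 53);
console.log(big + 1 === big); // true: the +1 is lost to rounding

// 32-bit multiplication: the product is rounded to a double *before* it can
// be truncated to 32 bits, so the low bits come out wrong.
const a = 0x7fffffff;
const viaDouble = (a * a) | 0;   // 0: low bits were rounded away
const viaImul = Math.imul(a, a); // 1: C-style 32-bit wraparound

// Fallback for engines without Math.imul: split each operand into 16-bit
// halves so no intermediate product exceeds what a double holds exactly.
function imulPolyfill(x, y) {
  const xh = (x >>> 16) & 0xffff, xl = x & 0xffff;
  const yh = (y >>> 16) & 0xffff, yl = y & 0xffff;
  return (xl * yl + (((xh * yl + xl * yh) << 16) >>> 0)) | 0;
}

console.log(viaDouble, viaImul, imulPolyfill(a, a)); // 0 1 1
```

The fallback gets the right answer, but it turns one multiply instruction into several multiplies, shifts and masks, which is why it hurts in hot loops.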

Luckily, because of the first point above, JavaScript JIT compilers have gone to some trouble to optimize common integer math operations. That is, JavaScript engines do support integer types and integer math internally; you just can’t be sure at the source level when you actually have an integer.

Did I say “luckily”? :P

So this leads to one more ugly consequence:

- In order to force the JIT compiler to run integer math, emscripten output coerces types constantly — “(x|0)” to force to 32-bit int, or “+x” to force to 64-bit float.

This actually performs well once it’s through the JIT compiler, but it bloats the .js code that we have to ship to the browser.
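For a feel of what that looks like, here is a hand-written imitation of the coercion style. It is only a sketch: real emscripten output is machine-generated, minified and far longer, and the function names here are made up.

```javascript
// Integer add: every parameter and result is pinned to int32 with |0.
function addInts(x, y) {
  x = x | 0;
  y = y | 0;
  return (x + y) | 0; // wraps around like a C int
}

// Mixed math: +x marks a double, +(x|0) converts an int to a double.
function scale(x, f) {
  x = x | 0;
  f = +f;
  return +(+(x | 0) * f);
}

console.log(addInts(0x7fffffff, 1)); // -2147483648, same overflow as C
console.log(scale(3, 0.5));          // 1.5
```

None of those annotations change the results for well-typed inputs; they exist so the engine can prove the types up front. They also account for a lot of the extra bytes in the shipped file.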

The heap is an island

Emscripten provides a C-like memory model by using Typed Arrays: a single ArrayBuffer provides a heap that can be read/written directly as various integer and floating point types.
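A minimal sketch of the idea, outside of emscripten entirely. The view names mimic the ones emscripten exposes, but here they are ordinary local variables and the buffer size is arbitrary.

```javascript
// One ArrayBuffer plays the role of C memory...
const buffer = new ArrayBuffer(64 * 1024);

// ...and typed-array views give typed access to the very same bytes.
const HEAPU8 = new Uint8Array(buffer);
const HEAP32 = new Int32Array(buffer);
const HEAPF64 = new Float64Array(buffer);

// A C "pointer" is just a byte offset into the buffer.
const ptr = 16;

// Roughly `*(int32_t *)ptr = 0x01020304;` (index = byte offset / 4)
HEAP32[ptr >> 2] = 0x01020304;

// Roughly reading `((uint8_t *)ptr)[0..3]`; byte order follows the host CPU.
const bytes = Array.from(HEAPU8.subarray(ptr, ptr + 4));
console.log(bytes);

// Roughly `*(double *)(ptr + 8) = 0.25;` (index = byte offset / 8)
HEAPF64[(ptr + 8) >> 3] = 0.25;
```

Writing through one view and reading through another is exactly how a C cast between pointer types ends up behaving in the compiled code.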

However…

Because all pointers are indexes into the heap, there’s no way for C code to reference data in an external ArrayBuffer or other structure. This is obviously an issue when your video codec needs to decode a data packet that’s been passed to it from JavaScript!

Currently I’m simply copying the input packets into emscripten’s heap in a wrapper function, then calling the decoder on the copy. This works, but the extra copy makes me sad. It’s also relatively slow in Internet Explorer, where the copy implementation using Uint8Array.set() seems to be pretty inefficient.
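The shape of that wrapper is roughly as follows. This is a sketch with stand-ins, not the actual ogv.js code: `fakeMalloc` and `fakeDecode` are hypothetical placeholders for the allocator and decoder entry point that a real emscripten build would export.

```javascript
// Stand-in for the emscripten heap (a real build uses the module's own view).
const HEAPU8 = new Uint8Array(1024);

// Hypothetical bump allocator standing in for the compiled malloc().
// No bounds checking or freeing; it only exists to hand out offsets.
let nextFree = 8;
function fakeMalloc(size) {
  const ptr = nextFree;
  nextFree += (size + 7) & ~7; // keep allocations 8-byte aligned
  return ptr;
}

// Hypothetical decoder entry point: like compiled C, it only sees
// (pointer, length) and reads from the heap. Here it just sums the bytes.
function fakeDecode(ptr, len) {
  let sum = 0;
  for (let i = 0; i < len; i++) sum += HEAPU8[ptr + i];
  return sum;
}

// The wrapper: copy the packet into the heap, then decode the copy.
function processPacket(packet) {
  const bytes = new Uint8Array(packet); // view of the external ArrayBuffer
  const ptr = fakeMalloc(bytes.length);
  HEAPU8.set(bytes, ptr);               // the extra copy
  return fakeDecode(ptr, bytes.length);
}

console.log(processPacket(new Uint8Array([1, 2, 3]).buffer)); // 6
```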

Getting data out can be done “zero-copy” if you’re careful, by creating a typed-array subview of the emscripten heap; this can be used for instance to upload a WebGL texture directly from the decoder. Neat!
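Here’s a small sketch of the subview approach, with a plain Uint8Array standing in for the emscripten heap and a made-up offset standing in for a pointer returned by the decoder.

```javascript
// Stand-in for the emscripten heap.
const HEAPU8 = new Uint8Array(1024);

// Pretend the decoder left a 4x2 plane of luma samples at this offset.
const planePtr = 256;
const width = 4, height = 2;
HEAPU8.fill(0x80, planePtr, planePtr + width * height);

// subarray() creates a new view onto the same ArrayBuffer; no bytes move.
const plane = HEAPU8.subarray(planePtr, planePtr + width * height);
console.log(plane.buffer === HEAPU8.buffer); // true
console.log(plane.byteOffset);               // 256

// In a browser, a view like this could be handed straight to a WebGL
// texture upload call.

// The "careful" part: the view aliases live decoder memory.
HEAPU8[planePtr] = 0xff;
console.log(plane[0]); // 255: the view sees the change
```

The view is only good until the decoder reuses that memory, or until the heap’s underlying buffer is replaced (for example if heap growth is enabled), so it has to be consumed right away.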

But, that trick doesn’t work when you need to pass data between worker threads.
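The reason is that a subview still points at the whole heap buffer, and a worker message can’t share that memory with the other side; transferring the heap’s buffer would take it away from the decoder. So the frame has to be copied out first. A sketch, where `sendFrame` and the message shape are made up for illustration:

```javascript
// Stand-in for the emscripten heap.
const HEAPU8 = new Uint8Array(1024);

// `port` is anything with a postMessage(message, transferList) method:
// a Worker, a MessagePort, or `self` inside a worker.
function sendFrame(port, ptr, length) {
  // slice() copies the bytes into a fresh, standalone ArrayBuffer...
  const copy = HEAPU8.slice(ptr, ptr + length);
  // ...which is then transferred, so it isn't copied a second time.
  port.postMessage({ type: 'frame', bytes: copy.buffer }, [copy.buffer]);
}
```

After the transfer the sending side can no longer touch `copy`, which is fine since the heap still holds the original.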

Workers of the JavaScript world, unite!

Parallel computing is now: these days just about everything from your high-end desktop to your low-end smartphone has at least two CPU cores and can perform multiple tasks in parallel.

Unfortunately, despite half a century of computer science research and a good decade of marketplace factors, doing parallel programming well is still a Hard Problem.

Regular JavaScript provides direct access to only a single thread of execution, which keeps things simple but can be a performance bottleneck. Browser makers introduced Web Workers to fill this gap without introducing the full complexities of shared-memory multithreading…

Essentially, each Worker is its own little JavaScript universe: the main thread context can’t access data in a Worker directly, and the Worker can’t access data from the main context. Neither can one thread cause the other to block… So to communicate between threads, you have to send asynchronous messages.

This is actually a really nice model that reduces the number of ways you can shoot yourself in the foot with multithreading!

But, it maps very poorly to C/C++ threads, where you start with shared memory and foot-shooting and try to build better abstractions on top of that.

So, we’re not yet able to make use of any multithreaded capabilities in the actual decoders. :(

But, we can run the decoders themselves in Worker threads, as long as they’re factored into separate emscripten subprograms. This keeps the main thread humming smoothly even when video decoding is a significant portion of wall-clock time, and can provide a little bit of actual parallelism by running video and audio decoding at the same time.
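As a rough sketch of that split, each decoder worker boils down to a message handler like the one below. The names and the message format are hypothetical, not the ones ogv.js uses: `decode` stands in for a compiled decoder and `post` for the worker’s postMessage.

```javascript
// Builds the message handler for one decoder worker.
// `decode` takes a Uint8Array packet and returns a Uint8Array of output.
function makeDecoderHandler(decode, post) {
  return function onMessage(event) {
    const msg = event.data;
    if (msg.type === 'packet') {
      const output = decode(new Uint8Array(msg.bytes));
      // The reply is just another message; the sender never blocks on it
      // and matches it back up to its request by id.
      post({ type: 'decoded', id: msg.id, bytes: output.buffer }, [output.buffer]);
    }
  };
}

// Inside a worker script this would be wired up roughly as:
//   self.onmessage = makeDecoderHandler(decodeVideoPacket, self.postMessage.bind(self));
// with a second worker doing the same for the audio decoder.
```

Because the handler only knows about messages, the same code works for the video worker and the audio worker, and the main thread stays free to draw frames and feed audio while they run.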

The Theora and VP8 decoders currently have no inherent multithreading available, but VP9 does, so that’s worth looking out for in the future…

Some browser makers are working on providing an “opt-in” shared-memory threading model through an extended ‘SharedArrayBuffer’ that emscripten can make use of, but this is not yet available in any of my target browsers (Safari, IE, Edge).

Waiting for SIMD

Modern CPUs provide SIMD instructions (“Single Instruction Multiple Data”) which can really optimize multimedia operations where you need to do the same thing a lot of times on parallel data.

Codec libraries like libtheora and libvpx use these optimized instructions explicitly in key performance hotspots when compiling to native code… but how do you deal with this when compiling via JavaScript?

There is ongoing work in emscripten and by at least some browser vendors to expose common SIMD operations to JavaScript; I should be able to write suitable patches to libtheora and libvpx to use the appropriate C intrinsics and see if this helps.

But, my main targets (Safari, IE, Edge) don’t support SIMD in JS yet so I haven’t started…

GPU Madness

The obvious next thing to ask is “Hey what about the GPU?” Modern computers come with amazing high-throughput parallel-processing graphics units, and it’s become quite the rage to GPU accelerate everything from graphics to spreadsheets.

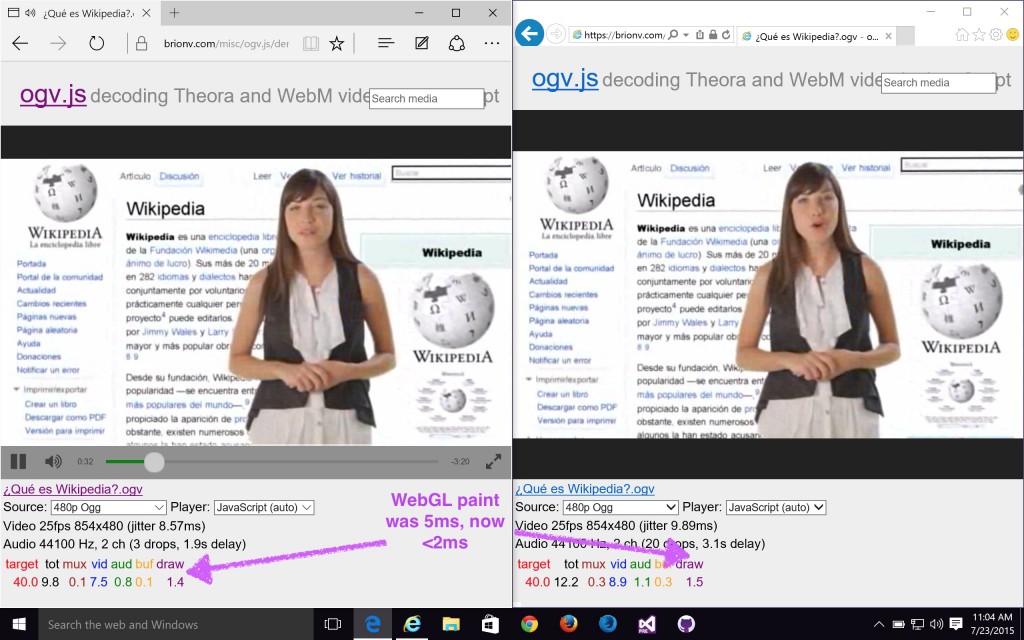

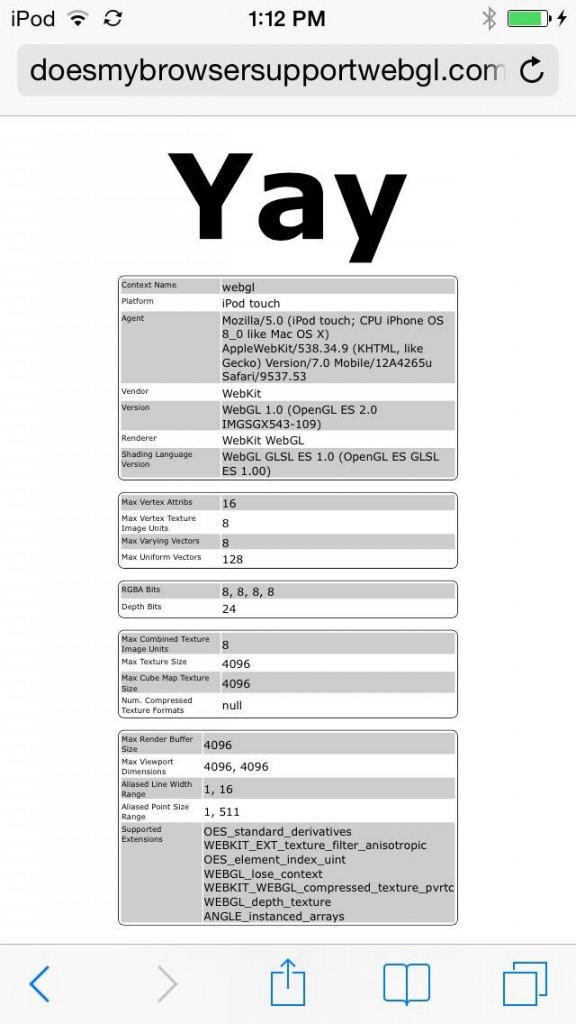

The good news is that current versions of all main browsers support WebGL, and ogv.js uses it if available to accelerate drawing and YCbCr-RGB colorspace conversion.

The bad news is that’s all we use it for so far — the actual video decoding is all on the CPU.
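For reference, the colorspace conversion is simple per-pixel math, which is why it’s such a natural fit for a fragment shader. Here is the same calculation written as plain JavaScript. The coefficients are an assumption: they are the usual BT.601 “video range” approximations, and the exact matrix ogv.js uses may differ.

```javascript
// Round and clamp to the 0..255 range of an 8-bit channel.
function clamp8(v) {
  return Math.max(0, Math.min(255, Math.round(v)));
}

// Assumption: BT.601 video-range input (Y in 16..235, Cb/Cr in 16..240).
function ycbcrToRgb(y, cb, cr) {
  const yy = 1.164 * (y - 16);
  const r = yy + 1.596 * (cr - 128);
  const g = yy - 0.391 * (cb - 128) - 0.813 * (cr - 128);
  const b = yy + 2.018 * (cb - 128);
  return [clamp8(r), clamp8(g), clamp8(b)];
}

console.log(ycbcrToRgb(16, 128, 128));  // [0, 0, 0]       (black)
console.log(ycbcrToRgb(235, 128, 128)); // [255, 255, 255] (white)
```

Doing this on the CPU means a few multiplies and adds for every pixel of every frame; on the GPU it comes almost for free as part of drawing the texture.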

It should be possible to use the GPU for at least parts of the video decoding steps. But, it’s going to require jumping through some hoops…

- WebGL doesn’t provide general-purpose compute shaders, so we’d have to shovel data into textures and squish computation into fragment shaders meant for processing pixels.

- WebGL is only available on the main thread, so if decoding is done in a worker there’ll be additional overhead shipping data between threads

- If we have to read data back from the GPU, that can be slow and block the CPU, dropping efficiency again

- The codec libraries aren’t really set up with good GPU offloading points in them, so this may be Hard To Do.

libvpx at least has a fork with some OpenCL and RenderScript support — it’s worth investigating. But no idea if this is really feasible in WebGL.

In the meantime, I’ve got lots of other things to fix in Wikipedia’s video support so will be concentrating on that stuff, but will keep on improving this as the JS platform evolves!