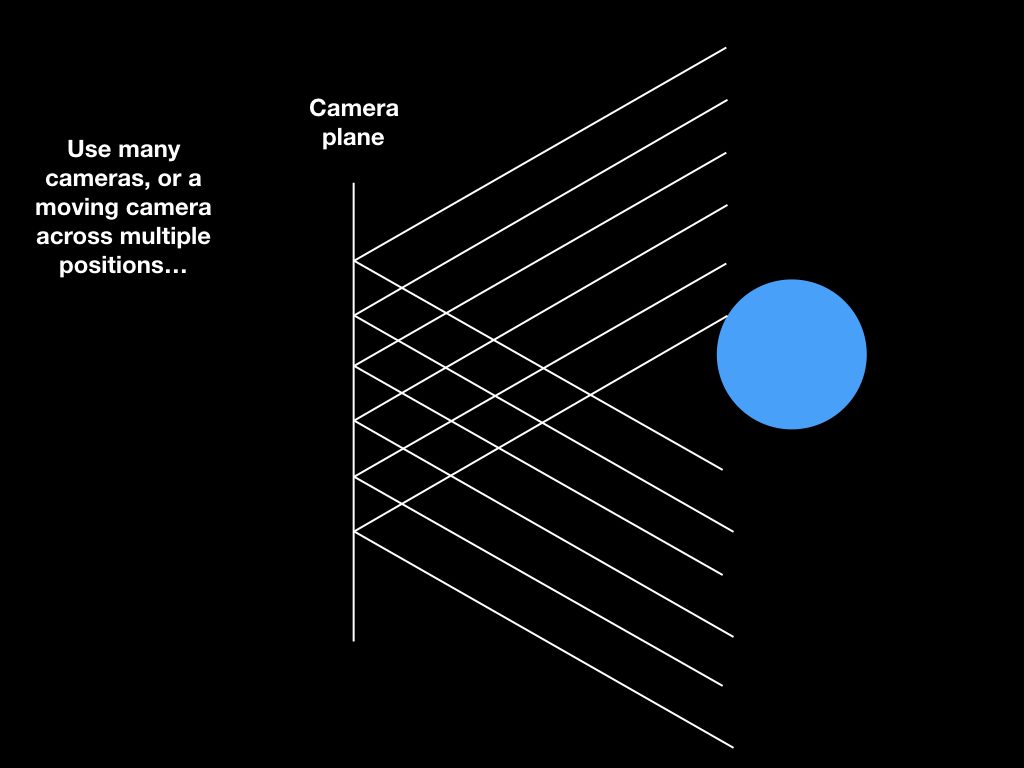

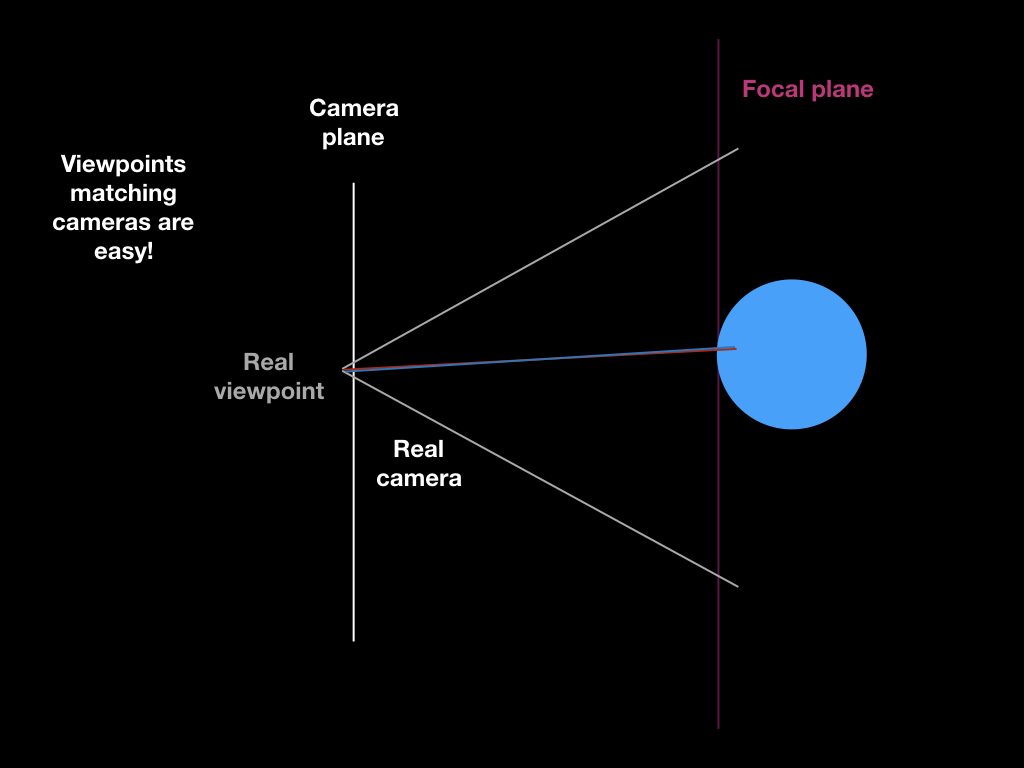

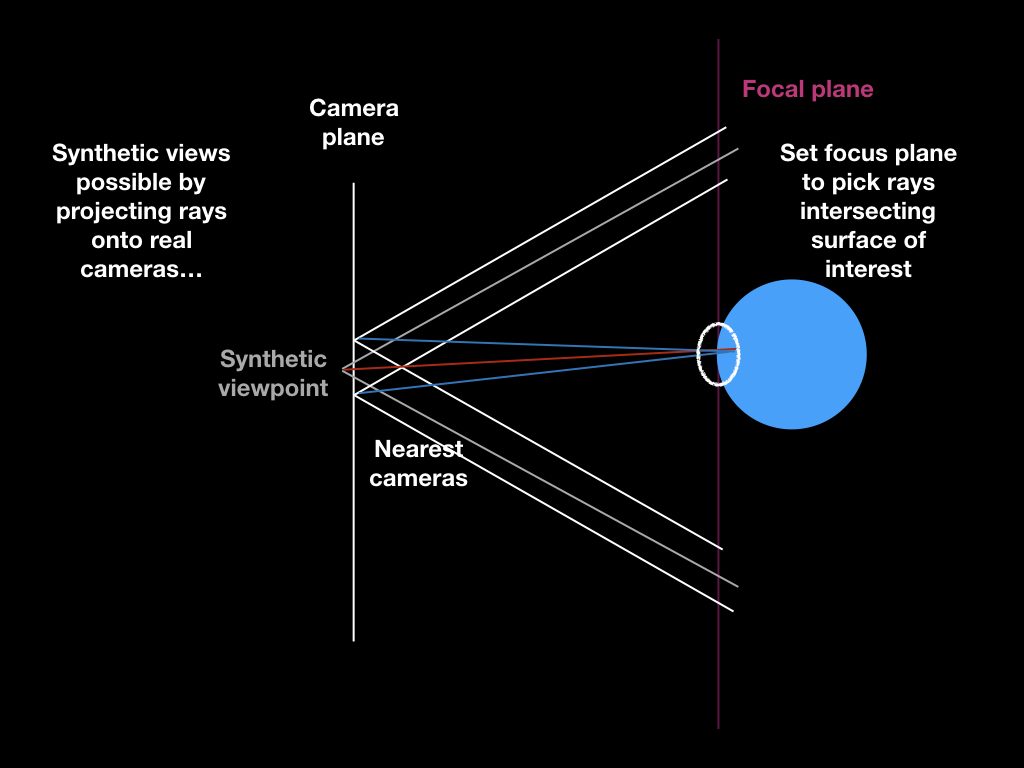

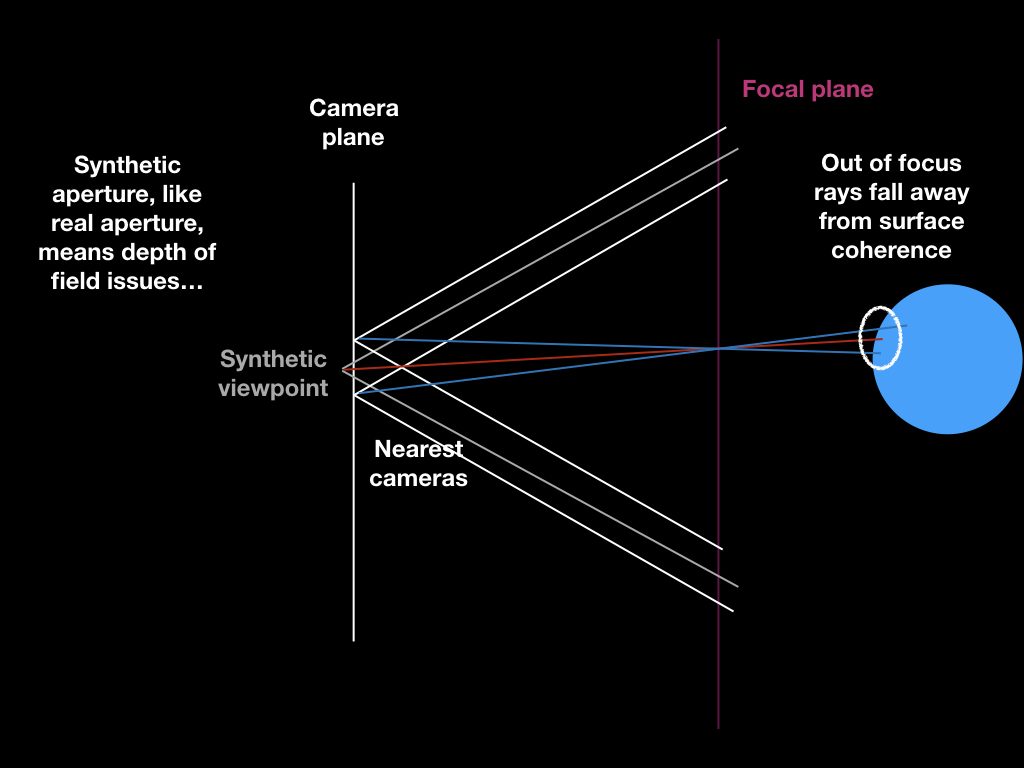

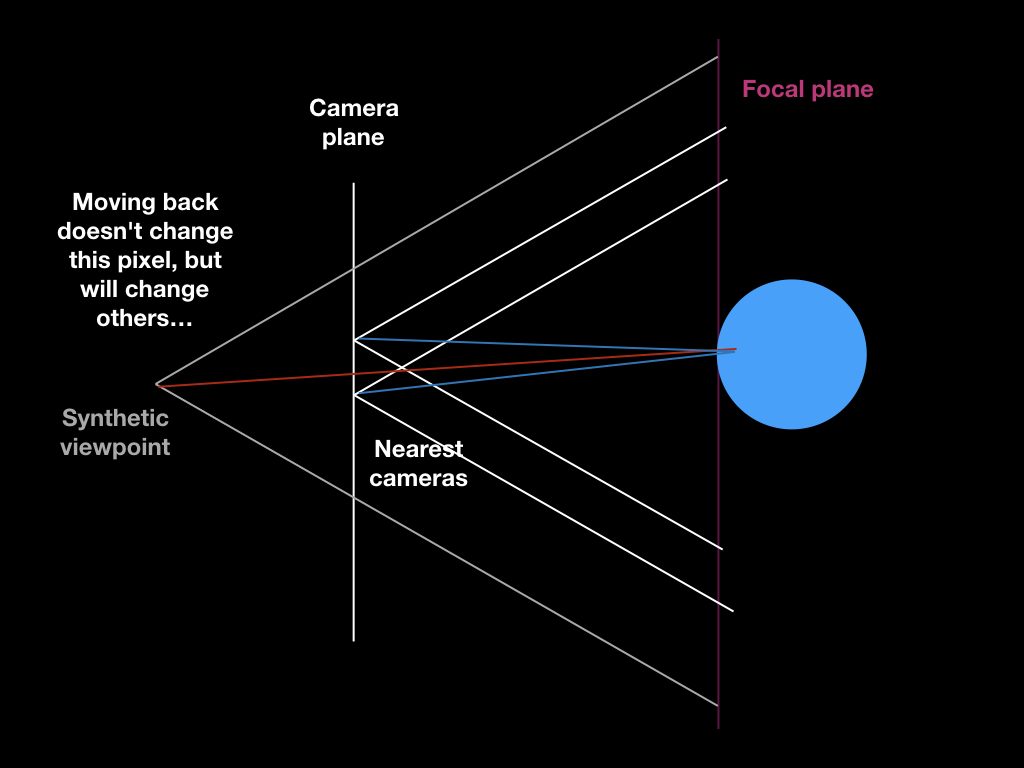

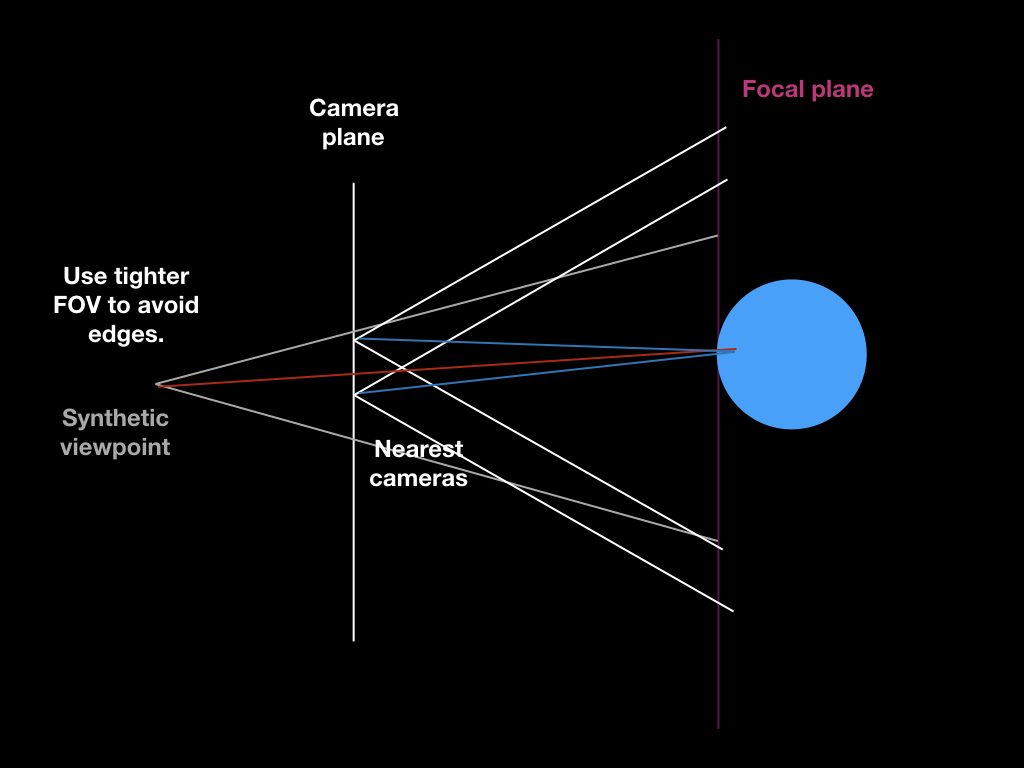

Been reading about light field rendering for free movement around 3D scenes captured as images. Made some diagrams to help it make sense to me, as one does…

There’s some work going on to add viewing of 3D models to Wikipedia & Wikimedia Commons (which is pretty rad!), but geometric meshes are hard to scan at home and don’t represent texture and lighting well, whereas light fields capture this stuff fantastically. It might be interesting some day to play with a light field scene viewer that provides parallax as you rotate your phone, or a 3D see-through window in VR views. The real question is whether the _scanning_ of scenes can be ‘democratized’ using a phone or tablet as a moving camera, combined with the spatial tracking that’s used for AR games to position the captures in 3D space…

Someday! No time for more than idle research right now. ;)

Here, enjoy the diagrams.