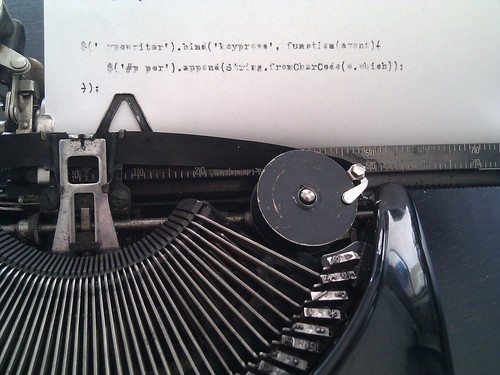

Typed scripting


Jean-Claude et Hercule

Execution plan

    eat();
    drink();
    beMerry();
    setTimeout(function() {
        $('.we').die();
    }, 24 * 3600 * 1000);

SyncMaster P

Yo I’m SyncMaster P and I’m here to say

your colors are all washed out but mine are bright as day!

My contrast is dynamic, 50,000:1

but your monitor’s all washed out kid, just face it you’re done.

I got the wide screen, yeah it’s 1080p

and you’re still impressed by that old DVD?

Just don’t be tempted by that darn technolust;

will a 27-incher come leave me in the dust?

I ain’t got USB or DisplayPort hacks,

but I’m still compatible with things that aren’t Macs!

Book review: Feed

Disclaimer: I know the author personally, which may mean I’m biased in favor of awesomeness.

Just finished up Feed, the first volume of Mira Grant’s epic zombie trilogy Newsflesh. If the words “epic zombie trilogy” put you off, you would do well to take a second look: this isn’t a horror hack-and-slash so much as a science-fiction political thriller, set in a near-future world transformed by 25 years of dealing with an infection that kills in minutes, then keeps the bodies moving to attack the living…

I grew up reading the science fiction classics: Asimov, Heinlein, Farmer, Niven, McCaffrey… What always kept me reading late at night, eyes wide open, was their ability to craft a detailed world, work out the consequences of the big What If, and then tell a great story in it. Grant doesn’t disappoint; her post-Rising world is rich, and she weaves a gripping story from the societal consequences of a planet that has become quite legitimately paranoid.

Everything from home life to politics to the news and social interaction has been affected… after all, how wouldn’t a world where the dead attack the living be different? Where school safety is about keeping the children from gnawing each other’s faces off? Where it’s illegal to go outside the city without a weapon, or to come back in without uploading your blood test results to the CDC?

Most people stray from their homes as little as possible; online social networks have replaced most “in the flesh” socialization. Blogging, journalism, and reality television have merged as thrill-seekers risk their lives going outside to get the stories… or if they’re unlucky, to become them.

We dive into this world through the eyes of sibling internet journalists Georgia and Shaun Mason. Embedded with Senator Peter Ryman’s 2040 presidential campaign team on a dangerously old-fashioned nationwide tour, what could the Masons possibly uncover that’s more horrifying than the world they already live in?

Pick up a copy of Feed and you’ll find out… if you dare!

You may also enjoy…

If urban fantasy detective mysteries are more your speed, give the October Daye series a try, penned under Grant’s mundane name of Seanan McGuire.

Born to a Fey mother and human father, changeling Toby Daye always seems to end up with the short end of both sticks. A former private detective trying to lie low after a particularly unpleasant magical transformation, she finds herself drawn back into the tricky, and deadly, games of Faerie politics in San Francisco, where murder spans two worlds…

Macroblogging

Man, I’ve been neglecting my regular blog lately. :)

Coming soon: MOBILE MADNESS!

- Heads-up on StatusNet’s upcoming desktop and mobile clients

- iPad review

- Nexus One / Android 2.1 review

- Where’s that damn roaming plan, anyway?

HTTP area codes

Ever notice that HTTP status codes and North American telephone area codes both have three digits?
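Taking the question literally, here is a toy sketch of how much the two numbering spaces overlap. It assumes deliberately simplified rules: HTTP status codes fall into the 1xx through 5xx classes, and an area code is any NXX number whose first digit is 2 through 9 and which is not an N11 service code. Real NANP assignments have further reservations that this ignores, and the function names are made up for illustration.

```javascript
// Toy comparison using simplified, assumed rules (not a full validator):
// - HTTP status codes fall into the classes 1xx through 5xx.
// - NANP area codes use the NXX shape: first digit 2-9, and N11 codes
//   (211, 311, ..., 911) are service codes rather than area codes.
const HTTP_CLASSES = {
  1: "informational",
  2: "success",
  3: "redirection",
  4: "client error",
  5: "server error",
};

// Returns the HTTP status class for 100-599, or null outside that range.
function httpStatusClass(code) {
  if (!Number.isInteger(code) || code < 100 || code > 599) return null;
  return HTTP_CLASSES[Math.floor(code / 100)];
}

// True if the number has the simplified NXX area-code shape.
function fitsAreaCodeShape(code) {
  if (!Number.isInteger(code) || code < 200 || code > 999) return false;
  return code % 100 !== 11; // exclude N11 service codes
}

// Counts three-digit numbers that fit both shapes at once.
function countOverlap() {
  let count = 0;
  for (let code = 100; code <= 999; code++) {
    if (httpStatusClass(code) !== null && fitsAreaCodeShape(code)) count++;
  }
  return count;
}
```

Under these rules only 2xx through 5xx can overlap, and the N11 exclusion removes four of those 400 numbers (211, 311, 411, 511), so `countOverlap()` returns 396. For example, `httpStatusClass(404)` is `"client error"` and `fitsAreaCodeShape(404)` is `true`, while `fitsAreaCodeShape(101)` is `false` because area codes never start with 1.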

Putting the media in Wikimedia!

I’m here in the City of Light for Wikimedia’s big Multimedia Usability meeting. We’ve got a fair chunk of our MediaWiki devs, along with folks from the media and communications end of the organization, in one place to hash out some of the key issues and see what we can really accomplish in the short and medium term. That includes a long-needed reworking of the upload interface and of the workflow for manually tidying up metadata on newly uploaded files, which sometimes arrive in batches of many thousands!

Since my free time’s pretty low these next couple of months, I’m trying to focus my own commitments where I can pack the most punch…

Things to do proof-of-concept coding on, to confirm our implementation theory:

- Metadata!

  - Proof of concept for template/field/subtemplate extraction and mapping to RDF

  - Coordinate with Robert on how to store and search the attached RDF fields in Lucene

  - [Note: I’ve been pulling existing EXIF data to get some stats on what can be pre-extracted; only about 7% of Commons files with EXIF data have a ‘Copyright’ field.]
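The template/field/subtemplate extraction item could start as something like the following minimal sketch. It assumes plain wikitext input, tracks `{{…}}` and `[[…]]` nesting so that pipes inside links or subtemplates don't split fields, and emits RDF-style `[subject, predicate, object]` triples as plain arrays rather than using a real RDF library. The `BASE` URI, the node-naming scheme, and all function names are hypothetical placeholders, and any text sitting next to a subtemplate in the same field is dropped.

```javascript
// Hypothetical placeholder namespace; not a real Wikimedia vocabulary.
const BASE = "http://example.org/template/";

// Returns the inner text of each top-level {{...}} in the string.
function findTemplates(text) {
  const found = [];
  let depth = 0;
  let start = -1;
  for (let i = 0; i < text.length - 1; i++) {
    const pair = text.slice(i, i + 2);
    if (pair === "{{") {
      if (depth === 0) start = i + 2;
      depth++;
      i++;
    } else if (pair === "}}" && depth > 0) {
      depth--;
      if (depth === 0) found.push(text.slice(start, i));
      i++;
    }
  }
  return found;
}

// Splits on "|" characters that are not nested inside {{...}} or [[...]].
function splitTopLevel(text) {
  const parts = [];
  let depth = 0;
  let current = "";
  for (let i = 0; i < text.length; i++) {
    const pair = text.slice(i, i + 2);
    if (pair === "{{" || pair === "[[") {
      depth++;
      current += pair;
      i++;
    } else if ((pair === "}}" || pair === "]]") && depth > 0) {
      depth--;
      current += pair;
      i++;
    } else if (text[i] === "|" && depth === 0) {
      parts.push(current);
      current = "";
    } else {
      current += text[i];
    }
  }
  parts.push(current);
  return parts;
}

// Maps every template field to a [subject, predicate, object] triple.
// A field holding a subtemplate gets its own node, processed recursively.
function templateToTriples(subject, wikitext) {
  const triples = [];
  for (const inner of findTemplates(wikitext)) {
    const [rawName, ...params] = splitTopLevel(inner);
    const name = rawName.trim();
    triples.push([subject, BASE + "usesTemplate", name]);
    let positional = 0; // unnamed params are numbered 1, 2, ...
    for (const param of params) {
      // Only an "=" that comes before any nested markup names the field.
      const eq = param.indexOf("=");
      const markup = param.search(/\{\{|\[\[/);
      const named = eq !== -1 && (markup === -1 || eq < markup);
      const key = named ? param.slice(0, eq).trim() : String(++positional);
      const value = (named ? param.slice(eq + 1) : param).trim();
      const predicate = BASE + name + "#" + key;
      if (findTemplates(value).length > 0) {
        const node = subject + "/" + name + "/" + key;
        triples.push([subject, predicate, node]);
        triples.push(...templateToTriples(node, value));
      } else {
        triples.push([subject, predicate, value]);
      }
    }
  }
  return triples;
}
```

For an `{{Information}}` block whose `description` wraps `{{en|A cat}}`, the description becomes its own node carrying an `en#1` triple, which is the kind of subtemplate mapping that would need to be indexed alongside the flat fields.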

Things to ponder specs on:

- Historical hit counters that I’ve always wanted, via the metrics workgroup

  - efficient storage

  - efficient queries & charting
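One way to think about the storage and charting trade-off for historical hit counters is to bucket hits per page per UTC day, so storage grows with pages times active days rather than with raw hits, and a chart query is a simple range scan. The in-memory sketch below is purely illustrative: the class and method names are hypothetical, and a real deployment would keep the buckets in a database table rather than a `Map`.

```javascript
// Hypothetical in-memory sketch: one counter per (page, UTC day) bucket.
class DailyHitCounter {
  constructor() {
    this.buckets = new Map(); // "page\tYYYY-MM-DD" -> hit count
  }

  // UTC calendar day, e.g. "2010-06-01".
  static dayKey(date) {
    return date.toISOString().slice(0, 10);
  }

  record(page, date = new Date()) {
    const key = page + "\t" + DailyHitCounter.dayKey(date);
    this.buckets.set(key, (this.buckets.get(key) || 0) + 1);
  }

  // Returns [{ day, hits }] for each UTC day from start to end inclusive,
  // filling days with no hits with zero so the series charts directly.
  series(page, start, end) {
    const out = [];
    const day = new Date(
      Date.UTC(start.getUTCFullYear(), start.getUTCMonth(), start.getUTCDate())
    );
    while (day <= end) {
      const key = DailyHitCounter.dayKey(day);
      out.push({ day: key, hits: this.buckets.get(page + "\t" + key) || 0 });
      day.setUTCDate(day.getUTCDate() + 1);
    }
    return out;
  }
}
```

Filling empty days with zero in the query, rather than storing zero rows, keeps the storage sparse while still handing the charting code a continuous series.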

Other things to peek at and give some directional advice on:

- Check out what it’d take to integrate Geohack tools better (via Magnus)

- Take a peek at Unicode encoding & keyboard input problems for some languages requiring funky script support such as Malayalam (via Gerard)

Update: I also want to poke at the XMPP RC test setup per Duesentrieb. :D

Screen integration with terminals?

As a guy who spends a lot of time in remote Linux shells from a laptop, I’m looking for better integration between my terminal emulators and screen sessions.

- Let me use native scrollbars to access the backscroll!

- Start me in screen by default so I don’t forget to start one.

- If I have disconnected sessions, let me choose to reconnect or create a new session via a reasonable menu.

- Automatically reconnect to the server and the screen session after network disruption (switching networks, sleeping the machine overnight, etc.)

- Don’t mess up backspace! (This plagues me a lot on Mac clients. Backspace works fine in a regular terminal but becomes forward delete in a screen session. WTF?)
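The reconnect-or-create menu from the wish list could be built from `screen -ls` output. This is a minimal sketch under assumptions: it treats each tab-indented line as a session, takes the first field as the session id and the last parenthesized field as its state, and that layout varies between screen versions. The function names are hypothetical, and actually running `screen -ls`, showing the menu, and reconnecting after a network drop are left out. `screen -r <id>` reattaches a detached session and plain `screen` starts a new one.

```javascript
// Parses `screen -ls` output into { id, state } records.
// Assumes session lines look like "\t12345.name\t(...)\t(Detached)".
function parseScreenList(output) {
  const sessions = [];
  for (const line of output.split("\n")) {
    if (!line.startsWith("\t")) continue; // skip header and footer lines
    const fields = line.trim().split("\t");
    const id = fields[0];
    const state = fields[fields.length - 1].replace(/[()]/g, "");
    sessions.push({ id, state });
  }
  return sessions;
}

// One "reattach" entry per detached session, plus a "new session" entry.
function buildMenu(sessions) {
  const menu = sessions
    .filter((s) => s.state === "Detached")
    .map((s) => ({ label: "Reattach " + s.id, command: ["screen", "-r", s.id] }));
  menu.push({ label: "New session", command: ["screen"] });
  return menu;
}
```

Keeping the parsing separate from the menu makes it easy to adjust for a different `screen -ls` layout without touching the rest.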

Linux & Mac clients both welcome… Anybody know of something along these lines that’s already available?

Dell Mini love

We finally replaced my fiancée’s ancient PC with a shiny new Dell laptop. While ordering, I couldn’t help myself and tossed in an Inspiron Mini 9 for myself.

This little cutie weighs in at just 2.26 pounds, less than half of my MacBook’s hefty 5 pounds. I’ve found that the Mini is much more back-friendly than my MacBook; I can painlessly lug it to the office with my laptop bag slung over my shoulder (easier for getting on and off the subway) instead of nerding it up in backpack mode.

The top-end model I picked packs 16GB of storage and 1GB of RAM running on a 1.6 GHz Atom processor, far more powerful than the computer I took with me to college in 1997. Admittedly, my iPhone also beats that computer: 8GB/128MB/300MHz vs. 6.4GB/64MB/266MHz. :P

The compact form factor does have some impact on usability, though. The 1024×600 screen sometimes feels too tight for vertical space, but they include a handy full-screen zoom hotkey for the window manager which opens things up.

The keyboard feels a bit cramped, and some of the keys are in surprising places (the apostrophe and hyphen are frequent offenders), but it’s still a lot easier to type serious notes or emails on it than on the iPhone. I had to disable the trackpad’s click and scrolling options to keep from accidentally pasting random text with my palms while typing…

The machine shipped with a customized Ubuntu distribution which is fully functional; they include a “friendly” launcher app which can be easily disabled, and even the launcher doesn’t interfere too badly. The desktop launch bar that’s crept into Gnome nicely handles my “I need Spotlight to launch stuff with the keyboard” fix. :) Firefox works fine (after uninstalling lots of Yahoo! extensions), Thunderbird installed easily enough, and I even got Skype to work with my USB headset! (AT&T’s international roaming charges can bite me…)

The biggest obstacle for me to use this machine every day is my Yojimbo addiction. I use Yojimbo for darn near everything: random notes, travel plans, budgeting, grocery lists, recipes, encrypted password stores, saving articles and documentation for future reference. It’s insanely easy to use, the search works, I don’t have to remember where I saved anything, and it syncs across all my Macs. But… it’s Mac-only. :(

I’m trying out WebJimbo, which provides an AJAX-y web interface for remotely accessing your Yojimbo notes. It’s very impressive for what it does, but I’m hitting some nasty brick walls: editing a note with formatting drops all the formatting, and I use embedded screenshots and coloring extensively in my notes.

I’ve seen some reports of people hacking Mac OS X onto the Dell Mini, which is very tempting as a way to avoid OS-switching overhead. :) But I think if I really want that, eventually I should just suck it up and buy a MacBook Air. The form factor is the same as my MacBook (full keyboard, roomier 1280×800 screen), but at 3 pounds it’s much closer to the Mini than to my regular MacBook in weight, so it should be about as back-friendly for the subway commute and air travel.

Of course, the Air costs $1799 and I got my tricked-out Mini for about $400, so… I’ll save my pennies and see. ;)